AI is no longer a future consideration—it’s already reshaping your Salesforce environment.

From Agentforce to Einstein GPT to a growing wave of third-party AI integrations, today’s Salesforce orgs are rapidly becoming intelligent, autonomous ecosystems. But while these technologies promise faster workflows and smarter decisions, they also introduce an exponential increase in risk—especially when it comes to data exposure, process breakages, and compliance failures.

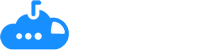

The problem? These new risks aren’t entirely new—they’re amplifications of long-standing issues like bad data hygiene, poor access controls, and unmonitored integrations. In a typical Salesforce org with 50+ connected applications, most teams don’t have clear visibility into which apps are accessing sensitive data, what they’re extracting, and who’s responsible.

In our latest Sonar webinar, Jack and Brian Olearczyk explored why Salesforce Shield Event Monitoring, paired with proactive connected app monitoring, has become a must-have defense strategy in the AI era. They broke down the biggest risks, the gaps in current governance models, and what security and ops teams can do right now to protect their data while enabling innovation. Watch the full webinar here to dive deeper into the discussion.

The Hidden Risk: Connected Apps You Aren’t Monitoring

Today, every Salesforce org is connected to a constellation of tools: Slack AI, ZoomInfo, Gong, Salesforce Code Builder, meeting transcription tools, marketing automation platforms, and AI-powered analytics. These apps interact with your CRM via OAuth tokens, pulling and pushing sensitive customer and business data, often without centralized oversight.

According to Sonar’s internal analysis of hundreds of customers, the average org has 57 connected apps. That’s 57 separate data access points—many of which are not fully governed, logged, or even known to platform owners.

The implications are serious:

- Apps may retain more access than necessary after onboarding.

- OAuth tokens might remain active after a tool is deprecated.

- AI tools can extract and store sensitive fields like social security numbers or health data if not properly monitored.

Most security teams discover these risks only after a process breaks or data is exposed—long after prevention is possible. Monitoring connected apps isn’t a “nice to have.” It’s a necessity.
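To make the "tokens that outlive the tool" risk concrete, here is a minimal Python sketch of a stale-token check. It assumes you have already exported token records from your org into plain dictionaries; the field names (`app`, `user`, `last_used`), the sample apps, and the 90-day threshold are illustrative assumptions, not a Salesforce or Sonar API.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical token records: the field names are illustrative only.
def find_stale_tokens(tokens, now, max_idle_days=90):
    """Return tokens that have not been used within max_idle_days."""
    cutoff = now - timedelta(days=max_idle_days)
    stale = []
    for token in tokens:
        last_used = token.get("last_used")
        # A token that was never used is treated as stale as well.
        if last_used is None or last_used < cutoff:
            stale.append(token)
    return stale

now = datetime(2025, 6, 1, tzinfo=timezone.utc)
tokens = [
    {"app": "NoteTaker", "user": "a@example.com",
     "last_used": datetime(2025, 5, 28, tzinfo=timezone.utc)},
    {"app": "OldEnrichmentTool", "user": "b@example.com",
     "last_used": datetime(2024, 11, 2, tzinfo=timezone.utc)},
    {"app": "PilotBot", "user": "c@example.com", "last_used": None},
]

stale_apps = [t["app"] for t in find_stale_tokens(tokens, now)]
print(stale_apps)  # ['OldEnrichmentTool', 'PilotBot']
```

A check like this is only a starting point: a stale token is a candidate for review and revocation, not proof that the integration is unused.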

Why AI Exponentially Increases the Impact of Misconfigurations

With tools like Agentforce, AI is no longer just querying Salesforce—it’s analyzing data, surfacing insights, writing to records, and even triggering workflows autonomously. That means a single misconfiguration can lead to wide-scale data leakage or even destructive automation.

Before AI, a misconfigured field or profile might result in minor workflow issues. But now? Those same missteps could result in:

- LLMs making inaccurate decisions

- Sensitive data being surfaced unintentionally

- Agents writing incorrect or destructive data back into your system

That’s why monitoring isn’t just about who’s connecting. It’s about what they’re doing in real time.

Without monitoring in place, an over-permissioned app or misconfigured agent might keep its access unchecked for weeks, or indefinitely.

4 High-Impact Risk Categories You Must Address When Integrating AI & Connected Apps with Salesforce

Integrating AI agents and third-party apps into Salesforce introduces new layers of complexity—and significantly magnifies existing vulnerabilities. From data exposure to automation risks, these issues demand a proactive security strategy.

In the webinar, Jack and Brian outlined four core risk categories every team should prioritize as they scale AI and connected app usage across their Salesforce environment. Here’s what to watch for:

1. Poor Data Quality → Bad AI Output

AI models like Einstein GPT and other LLM-powered agents rely entirely on the quality of data in your Salesforce environment. If that data is inconsistent, outdated, or misclassified, the AI will amplify those issues—not fix them. What used to be a “back-end” problem buried in fields rarely used by end users is now front and center in automated decisions, summaries, and customer-facing recommendations.

What once was harmless noise in your org becomes high-stakes insight delivered at scale. Inaccurate records, duplicated values, or incomplete data models aren’t just technical debt—they’re risks when AI agents start making decisions on top of them.

These risks occur because:

- AI agents query across your full data model—not just what’s actively in use.

- Fields with inconsistent values can skew AI outputs and lead to misleading insights.

- Poorly documented fields may be misinterpreted by AI and injected into workflows without context.

2. Misconfigurations Become Exponentially Dangerous

Misconfigured profiles, permission sets, or overly permissive OAuth scopes have always been problematic—but AI supercharges the consequences. In a manual environment, a misconfiguration might go unnoticed for weeks. With AI agents, those access levels are executed immediately and at scale.

During the webinar, Jack shared how agents, even those technically “within their scope,” could still create destructive outcomes if access wasn’t tightly scoped or configurations weren’t regularly audited. These vulnerabilities occur because:

- AI agents often operate autonomously and can write to records based on flawed logic or insufficient oversight.

- Overly permissive tokens can give third-party tools access to sensitive or regulated fields.

- Even internal users can unintentionally introduce risk by enabling AI-powered tools without proper vetting or sandbox testing.
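One way to picture an audit for overly permissive tokens is a simple scope review. The sketch below is in Python and works on a hand-built mapping of app names to granted OAuth scopes. Which scopes count as "broad" (`full` and `api` here), the app names, and the allow-list are all assumptions for illustration; your own policy should define them.

```python
# Scopes treated as broad here are an assumption; adjust to your own policy.
BROAD_SCOPES = {"full", "api"}

def audit_scopes(apps, allowed_broad=frozenset()):
    """Return {app: [broad scopes]} for apps not explicitly approved for broad access."""
    findings = {}
    for name, scopes in apps.items():
        risky = sorted(set(scopes) & BROAD_SCOPES)
        if risky and name not in allowed_broad:
            findings[name] = risky
    return findings

# Hypothetical inventory of connected apps and the scopes they were granted.
apps = {
    "MeetingSummarizer": ["api", "refresh_token"],
    "SupportWidget": ["chatter_api"],
    "DataLoaderJob": ["full", "refresh_token"],
}

findings = audit_scopes(apps, allowed_broad={"DataLoaderJob"})
print(findings)  # {'MeetingSummarizer': ['api']}
```

The allow-list matters as much as the check itself: it forces someone to document why a given integration is approved for broad access.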

3. Data Leakage & Shadow Integrations

AI’s promise of productivity leads to rapid experimentation—and often, new tools are connected to Salesforce without the knowledge of security or ops teams. These unsanctioned integrations, especially AI-enabled tools like summarizers, note-takers, or Chrome extensions, may start extracting data into off-platform storage, completely bypassing your internal governance workflows.

This “shadow AI” layer is extremely difficult to track without automated visibility into connected apps, OAuth token activity, and field-level data usage.

This is critical because:

- Sensitive data can be sent to external platforms without encryption or masking safeguards.

- Many AI tools retain or store data in systems you don’t control—violating internal compliance policies.

- Legal and regulatory risks compound when third-party vendors are not properly vetted under frameworks like GDPR, HIPAA, or PCI DSS.

4. Compliance Complexity Grows—Fast

As AI tools grow more powerful, they also grow more invasive—requiring access to deeper layers of your CRM to deliver value. That means field-level governance, masking, and classification become urgent priorities, not long-term roadmap items.

Salesforce Shield Event Monitoring offers critical visibility into system activity, but without structured data classification and connected app monitoring dashboards layered on top, compliance teams are left without the context they need to take action.

Striking a balance between protecting sensitive data and keeping it useful is critical because:

- Field-level access is now the standard for compliance, especially with AI tools pulling data dynamically.

- Sensitive information may be surfaced or shared unintentionally by AI-powered outputs (e.g., Slack summaries, chatbot responses).

- Compliance frameworks increasingly demand clear documentation of who accessed what data, and when—which isn’t possible without unified event tracking.

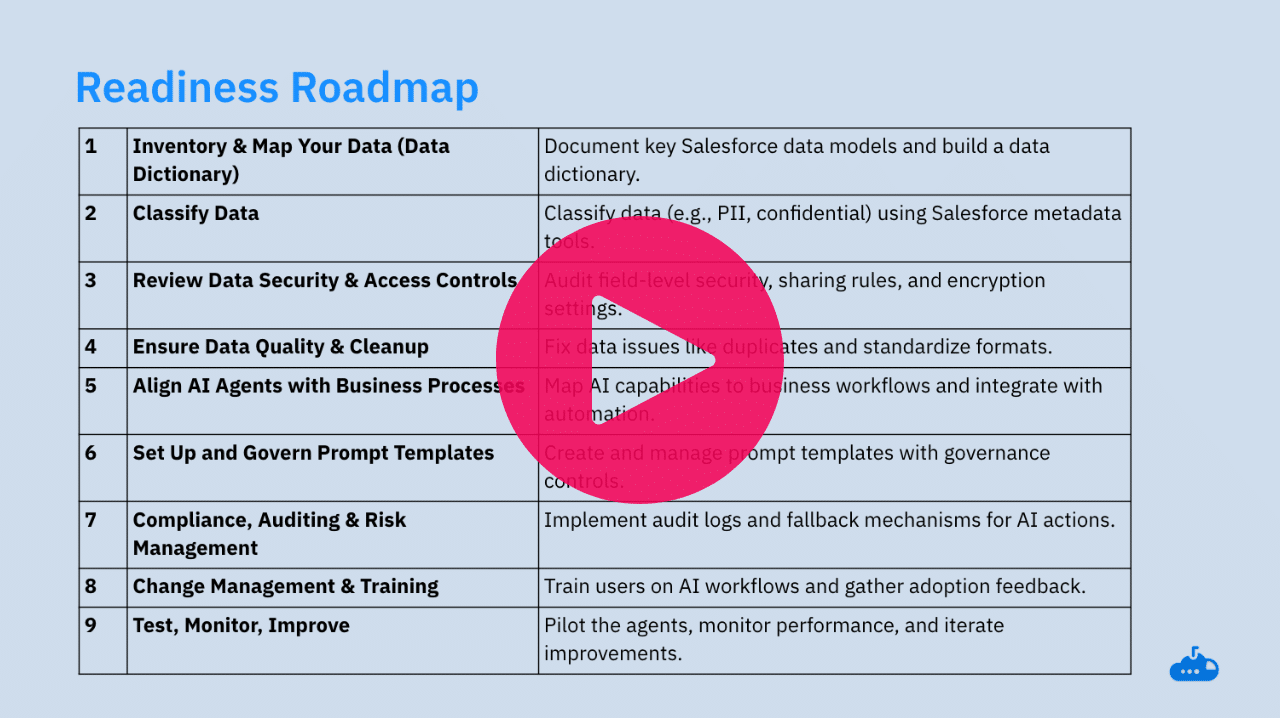

What Security Readiness Looks Like in an AI-Driven Org

Understanding the risks is only the beginning. With AI accelerating the pace of change—and expanding your risk surface—security teams need to evolve their Salesforce governance models to be real-time, adaptive, and automation-ready.

In the webinar, Jack emphasized that preparing your Salesforce org for safe, scalable AI adoption doesn’t require a complete overhaul. It’s not about boiling the ocean—it’s about starting with core security fundamentals that provide maximum visibility, control, and agility. These foundations allow your teams to embrace innovation without compromising integrity.

Here’s what that looks like in practice:

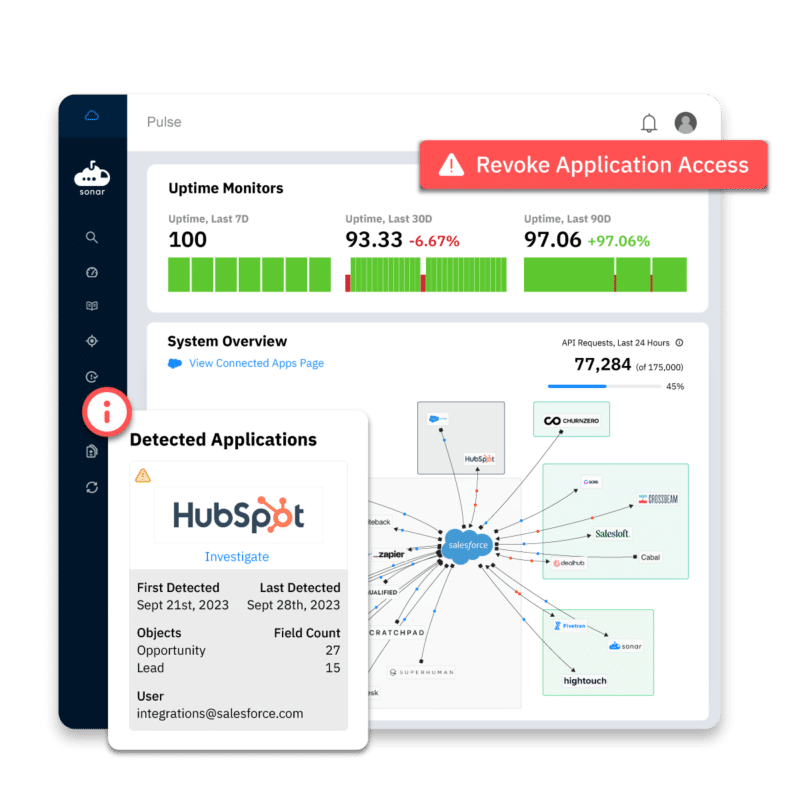

1. Establish Real-Time Visibility into Connected Apps

The foundation of AI security in Salesforce is knowing exactly which applications are connected, what data they can access, and how they’re behaving. This visibility should be constant—not limited to quarterly audits or manual exports.

Many teams are surprised to discover dozens of apps connected to Salesforce that were never formally reviewed or documented. As new AI tools are quickly adopted by business units, the number of access points—and potential risks—grows rapidly.

Real-time visibility means:

- Seeing every app with an active OAuth connection

- Understanding which fields are being accessed or modified

- Identifying the business owner or team that initiated the connection

- Monitoring token behavior, refreshes, and anomalies

Sonar’s Connected App Dashboard provides this visibility out of the box—giving teams a clear picture of their Salesforce integration landscape without requiring Salesforce Shield to get started.

2. Classify and Understand Your Data—Automatically

AI doesn’t just amplify your workflows—it also amplifies your data exposure. That’s why understanding the sensitivity and purpose of every field in your Salesforce environment is now a critical requirement.

But manually tagging fields, maintaining a data dictionary, or chasing down context from different teams is time-consuming—and often inaccurate.

That’s where AI-assisted data classification comes in. Sonar uses intelligent automation to:

- Scan your Salesforce schema and auto-identify PII, PHI, and financial fields

- Highlight fields actively used by connected apps and AI agents

- Surface data model inconsistencies or duplication across objects

- Automatically keep your data dictionary updated as your org evolves

With field-level context, your team can enforce policies based on what data is, not just where it lives. This leads to smarter security controls, better compliance reporting, and more confident AI adoption.
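As a rough illustration of what automated classification means at its simplest, the Python sketch below tags fields by matching their API names against keyword patterns. This is not how Sonar classifies data; the patterns, labels, and field names are assumptions chosen for the example, and a real classifier would also need to look at field contents and business context.

```python
import re

# Heuristic name patterns, for illustration only.
PATTERNS = {
    "PII": re.compile(r"ssn|social_security|birth|passport|email|phone", re.IGNORECASE),
    "PHI": re.compile(r"diagnosis|medical|health|prescription", re.IGNORECASE),
    "Financial": re.compile(r"iban|credit_card|card_number|bank_account|salary", re.IGNORECASE),
}

def classify_field(api_name):
    """Return every sensitivity label whose pattern matches the field name."""
    labels = [label for label, pattern in PATTERNS.items() if pattern.search(api_name)]
    return labels or ["Unclassified"]

# Hypothetical field API names.
fields = [
    "Contact.SSN__c",
    "Contact.Email",
    "Patient__c.Diagnosis_Code__c",
    "Account.Bank_Account__c",
    "Account.Industry",
]

classified = {name: classify_field(name) for name in fields}
for name, labels in classified.items():
    print(f"{name}: {', '.join(labels)}")
```

Name matching will miss sensitive data stored in vaguely named fields, which is exactly why "Unclassified" results deserve a second look rather than a pass.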

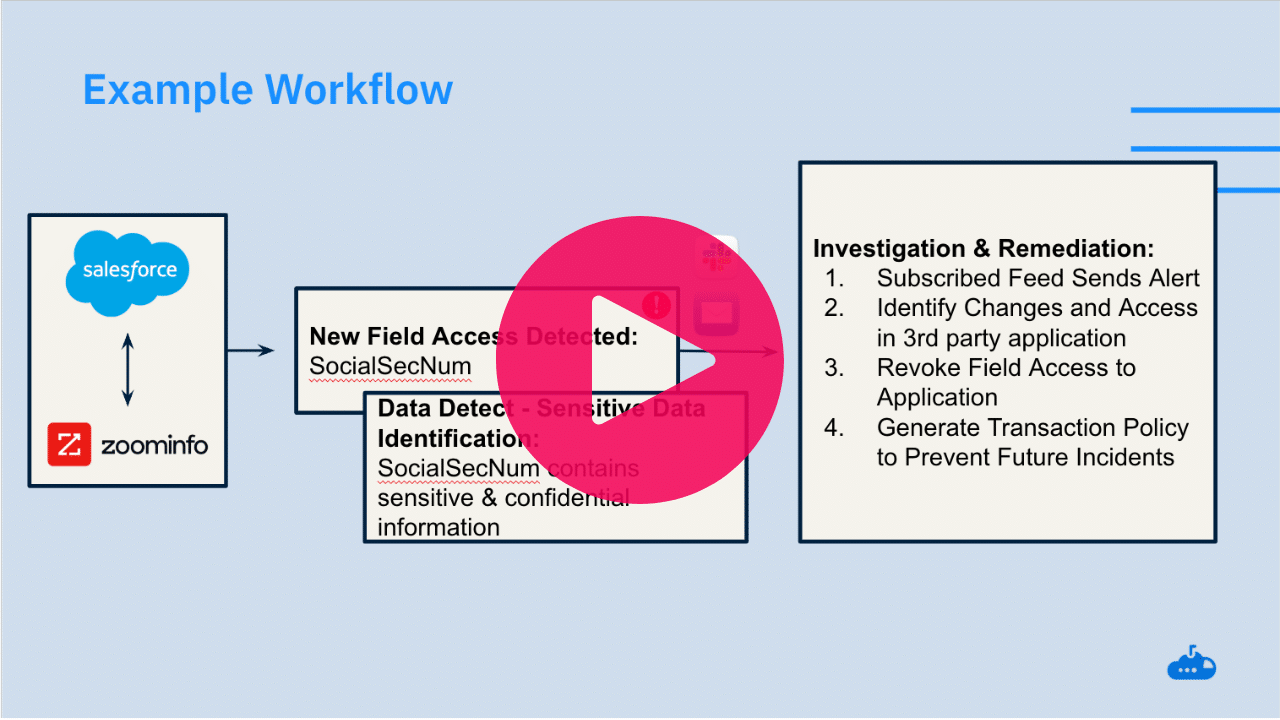

3. Monitor and Respond to Integration Behavior

Visibility is the first step—but awareness without action doesn’t reduce risk. Once your connected apps and data classification are in place, you need a system for monitoring behavioral changes and flagging anomalies before they become incidents.

Salesforce Shield Event Monitoring gives you the raw data. Sonar turns it into actionable intelligence.

This includes:

- Real-time alerts for unapproved app connections or token activity

- Change detection for field-level access patterns and API volume spikes

- Identification of inactive users tied to active integrations

- Automated correlation between connected apps and the sensitive data they’re touching

Sonar adds interpretive layers—like impact analysis, AI-generated remediation paths, and visual mapping of integrations—so teams can act fast, confidently, and at scale.
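To show what detecting an API volume spike can look like in principle, here is a small Python sketch that compares today’s call count for each app against its recent baseline. The three-standard-deviation threshold, the app names, and the daily counts are assumptions for illustration; in practice the counts would come from your event logs.

```python
from statistics import mean, stdev

def is_volume_spike(history, today, threshold=3.0):
    """Flag today's count if it sits more than `threshold` standard deviations above the mean."""
    if len(history) < 2:
        return False  # Not enough data to form a baseline.
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        return today > baseline
    return (today - baseline) / spread > threshold

# Hypothetical daily API call counts per app: (recent history, today's count).
daily_calls = {
    "MeetingSummarizer": ([1200, 1150, 1300, 1250, 1180], 9800),
    "MarketingSync": ([5000, 5200, 4900, 5100, 5050], 5150),
}

spikes = [
    app for app, (history, today) in daily_calls.items()
    if is_volume_spike(history, today)
]
print(spikes)  # ['MeetingSummarizer']
```

A fixed statistical threshold is a blunt instrument, so treat a flag as a prompt to investigate which fields the app was reading, not as a verdict.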

4. Build Guardrails Around Agent Behavior

As AI agents take on more autonomous roles inside Salesforce—writing to records, launching workflows, and handling sensitive customer data—it’s essential to establish governance controls specific to agent activity.

Treat AI agents like internal users: they need scoped access, behavioral monitoring, and lifecycle management. Without intentional design and oversight, agents can easily operate outside of your expectations—or worse, within permissions that haven’t been fully reviewed.

Sonar enables teams to:

- Align agents with documented, auditable business processes

- Apply least-privilege access policies to restrict unnecessary data exposure

- Monitor agent-initiated actions such as case creation, field updates, or workflow triggers

- Flag unexpected or excessive write operations that signal automation gone wrong

This helps ensure your AI agents operate as extensions of your strategy, not loose cannons with system-wide access.
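A guardrail for excessive writes can start as simply as counting. The Python sketch below tallies write actions per agent over a batch of events and flags any agent above a limit. The event shape (`agent`, `action`), the agent names, and the limit of 100 are hypothetical; a production check would work from real event logs and per-agent baselines.

```python
from collections import Counter

WRITE_ACTIONS = {"create", "update", "delete"}

def flag_excessive_writes(events, max_writes_per_agent=100):
    """Count write events per agent and return the agents above the limit."""
    writes = Counter(e["agent"] for e in events if e["action"] in WRITE_ACTIONS)
    return {agent: count for agent, count in writes.items()
            if count > max_writes_per_agent}

# Hypothetical batch of agent activity within one monitoring window.
events = (
    [{"agent": "CaseTriageAgent", "action": "update"}] * 40
    + [{"agent": "CleanupAgent", "action": "delete"}] * 250
    + [{"agent": "CleanupAgent", "action": "read"}] * 500
)

flagged = flag_excessive_writes(events)
print(flagged)  # {'CleanupAgent': 250}
```

Reads are ignored here on purpose, since the concern in this section is agents writing incorrect or destructive data back into the system.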


Conclusion: The Future of Salesforce Security Starts with Visibility

AI is moving fast—and your security model needs to move faster. As connected apps and autonomous agents become foundational to how your teams sell, service, and operate, the risk of data exposure, process failure, and compliance violations scales with them.

But this isn’t a reason to slow down. It’s a reason to evolve your Salesforce governance strategy.

By combining Salesforce Shield Event Monitoring with Sonar’s purpose-built visibility, classification, and monitoring platform, you can confidently adopt AI while maintaining control over your most sensitive data and processes. From day-one discovery to long-term oversight, Sonar helps you secure what matters—without slowing your business down.

Ready to see where your risks are hiding?

👉 Watch the full webinar on Salesforce Connected App Monitoring and AI Risk.

👉 Try Sonar free and see your connected app landscape in minutes.